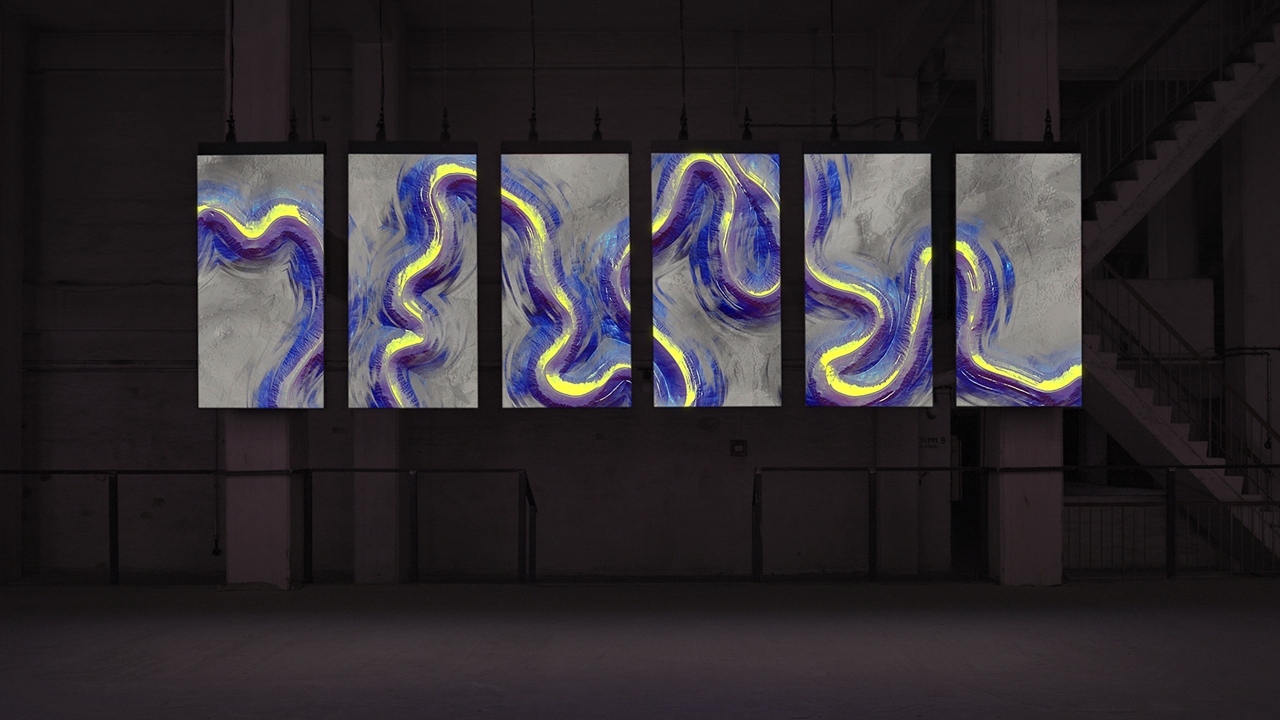

»Meandering River« is an audiovisual installation consisting of real-time visuals generated by an algorithm and music composed by an A.I. This digital artwork makes change perceivable by creating a unique awareness of time. Spanning multiple screens, the piece reinterprets the shifting behaviors of rivers by visualizing and sonifying their impact on the surface of the earth.

Meandering River

audiovisual art installation

2018

Over time, landscapes are gradually shaped by natural forces. These changes are indiscernible to the naked eye, because we only perceive one moment at a time. The fluctuations and the rhythmic movement of rivers are a glimpse into the past, as their traces provide evidence of the constant transformations that surround us.

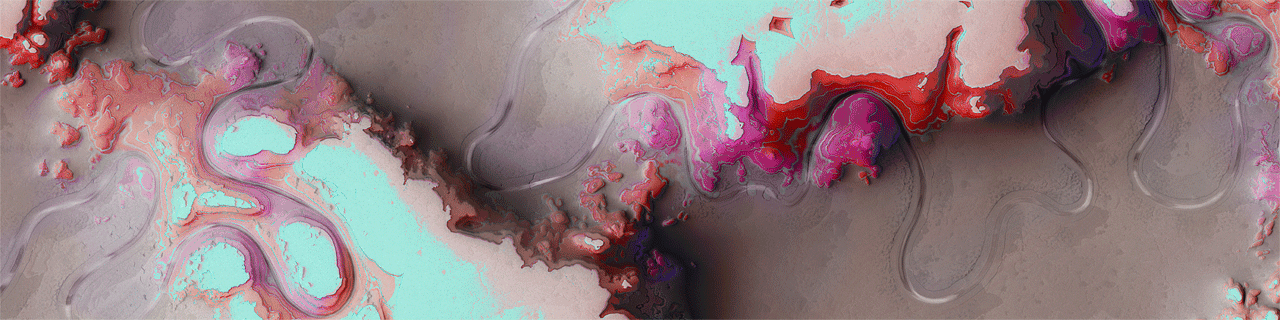

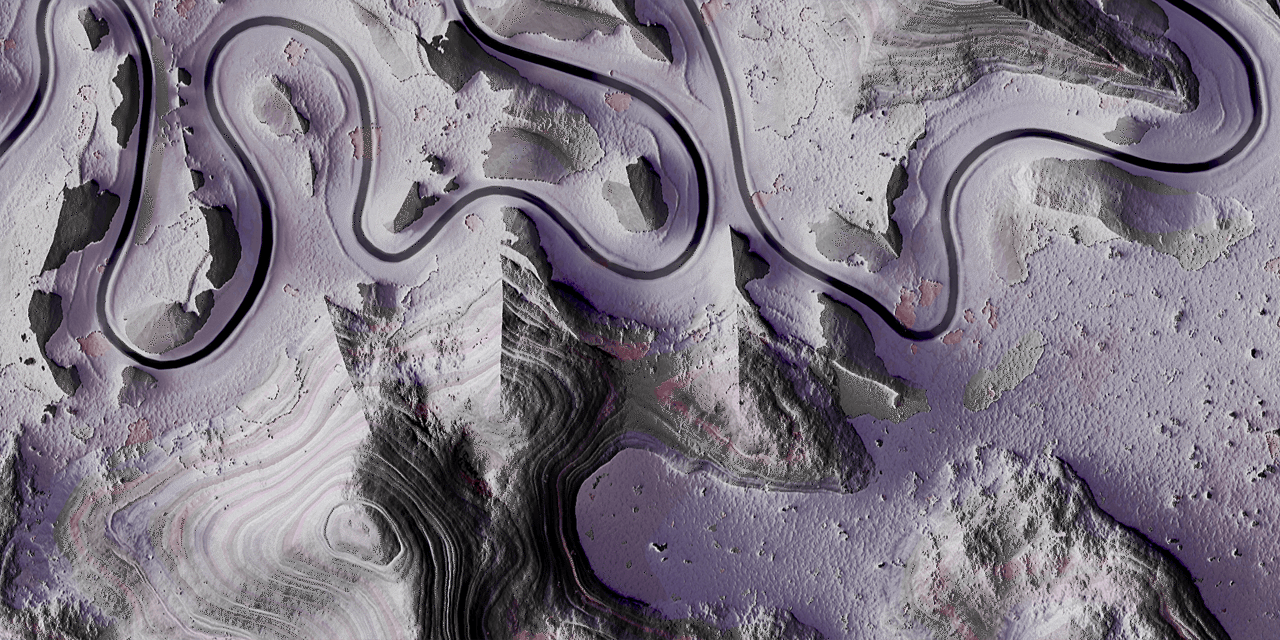

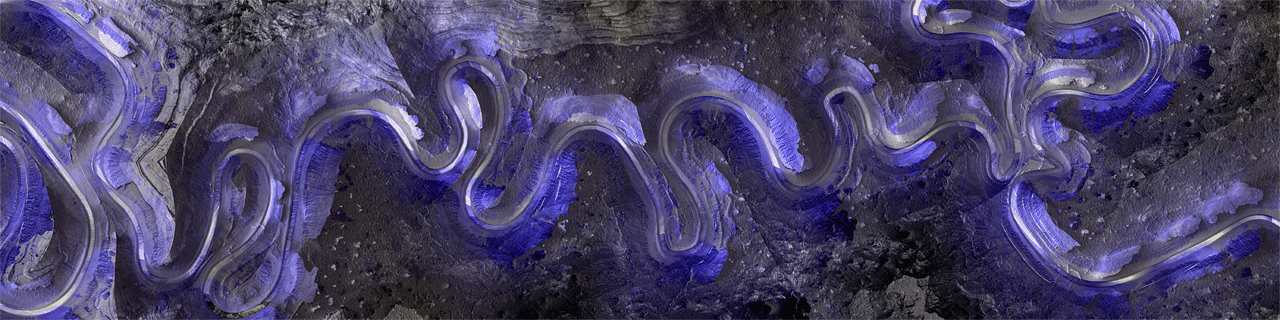

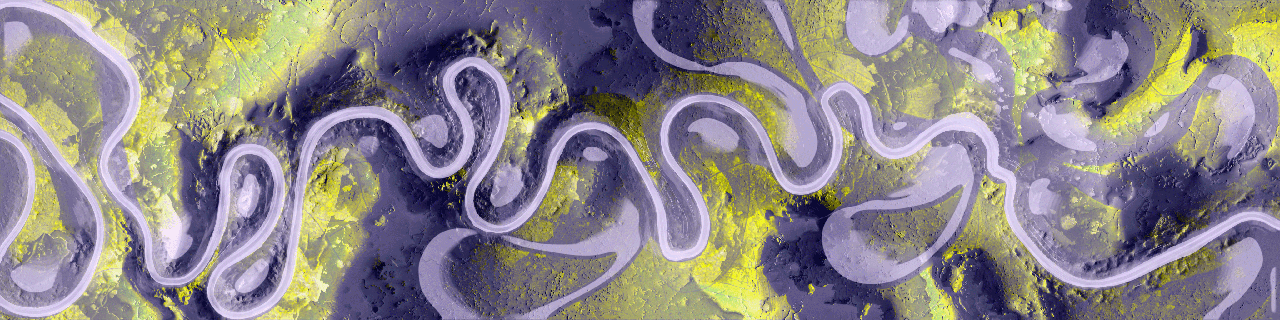

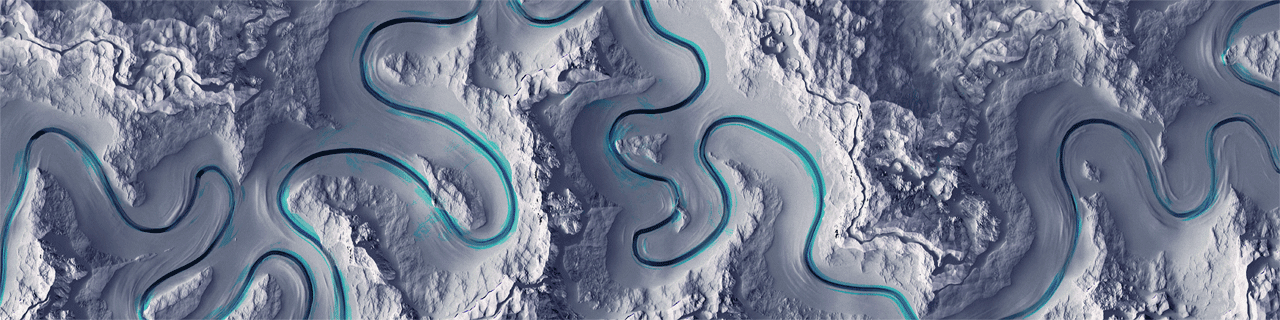

The audiovisual installation creates a bird’s eye view of a landscape. This orientation conceptualizes a new human perspective on space and time, in an attempt to decipher the unpredictable patterns. Experienced from this elevated perspective, the real-time visuals demonstrate what is happening on a larger scale.

Based on a bespoke algorithm, the rippling and oscillating movement inherent in the generated imagery provides a vantage point that transforms our understanding of progress and invites us to examine the rhythm of natural forces. As the evolving patterns of »Meandering River« emerge, the viewer is left with a humbling sense of the unpredictability of change and the beauty of nature.

Research – Making Change Visible

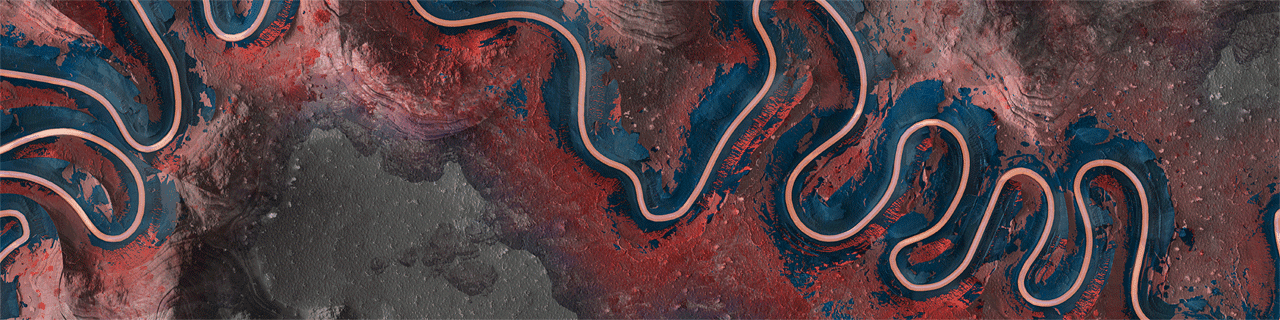

Over decades, flowing river structures shift as water erodes the ground, forms a riverbed, and carries soil deposits with it to other locations. This erosion and deposition changes the colour formations of the landscape, depending on the shade and quality of the ground.

By investigating academic and scientific research that examines this natural phenomenon, different algorithms were developed to authentically simulate the unpredictable movements of rivers and reinterpret their organic structures, rhythmic fluctuations and visual materiality.
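
To give a sense of how such a simulation can work in principle, here is a minimal sketch of a curvature-driven meander model in Python. It is not the algorithm used in the installation, which is not published here. It assumes the river is a polyline, that each bend migrates toward its outer bank in proportion to its local curvature, and that a loop is cut off (leaving an oxbow lake) once two distant points of the channel come close together. The names `curvature`, `migrate` and `cutoff` and all parameter values are illustrative assumptions.

```python
import math


def curvature(points):
    """Signed discrete curvature at each vertex (0 at both endpoints)."""
    k = [0.0] * len(points)
    for i in range(1, len(points) - 1):
        (x0, y0), (x1, y1), (x2, y2) = points[i - 1], points[i], points[i + 1]
        ax, ay = x1 - x0, y1 - y0
        bx, by = x2 - x1, y2 - y1
        la, lb = math.hypot(ax, ay), math.hypot(bx, by)
        if la == 0 or lb == 0:
            continue
        # Turning angle between the two segments, positive for a left turn.
        angle = math.atan2(ax * by - ay * bx, ax * bx + ay * by)
        k[i] = angle / (0.5 * (la + lb))
    return k


def migrate(points, rate=0.1):
    """Shift interior vertices toward the outer bank of each bend."""
    k = curvature(points)
    out = [points[0]]
    for i in range(1, len(points) - 1):
        (x0, y0), (x2, y2) = points[i - 1], points[i + 1]
        tx, ty = x2 - x0, y2 - y0
        lt = math.hypot(tx, ty) or 1.0
        nx, ny = -ty / lt, tx / lt  # unit normal pointing to the left bank
        x, y = points[i]
        # Moving against the curvature direction makes the bend grow.
        out.append((x - rate * k[i] * nx, y - rate * k[i] * ny))
    out.append(points[-1])
    return out


def cutoff(points, threshold=0.5, min_gap=5):
    """Cut the first loop whose neck is narrower than `threshold`.

    Returns (shortened channel, abandoned loop). The loop is empty when
    no cutoff happened.
    """
    for i in range(len(points)):
        for j in range(i + min_gap, len(points)):
            if math.dist(points[i], points[j]) < threshold:
                return points[:i + 1] + points[j:], points[i + 1:j]
    return points, []
```

Calling `migrate` repeatedly and checking `cutoff` after each step produces the familiar cycle of growing bends and abandoned loops. The abandoned loops could then be kept as a separate layer to draw oxbow lakes and meander scars.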

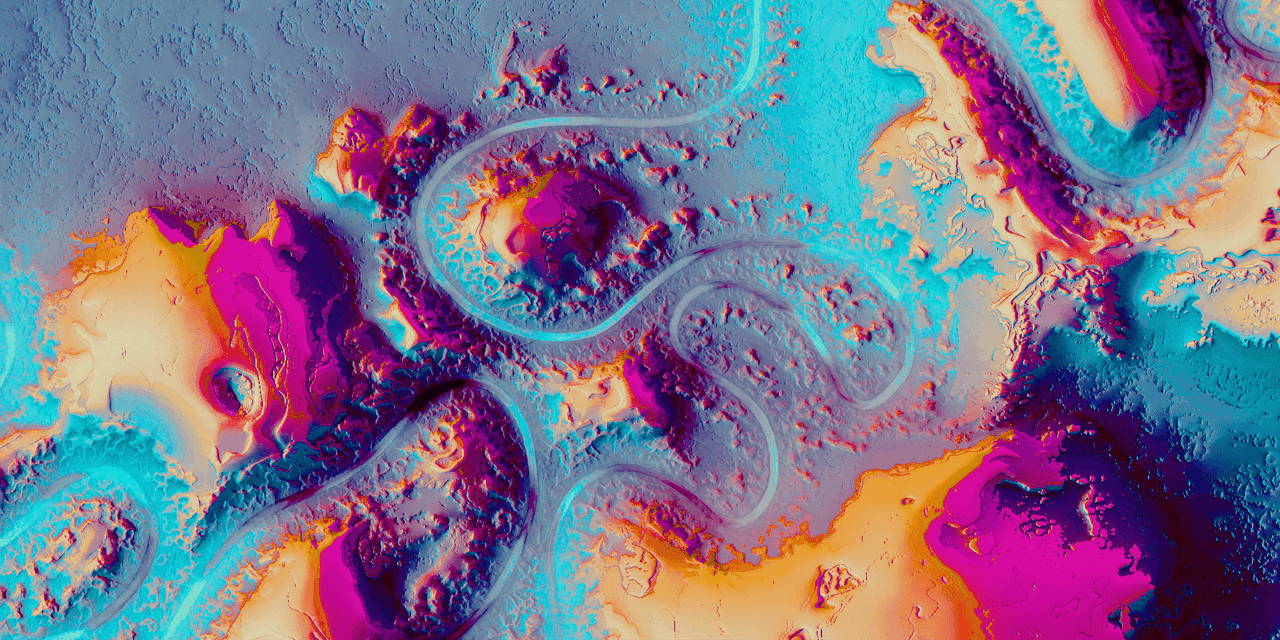

During the visual research and development process, a bespoke rendering pipeline was created, allowing an iterative visual exploration and composition. Various shaders and algorithms were applied in real-time as graphical layers to simulate natural appearances such as hydraulic and thermal erosion, oxbow lakes and meander scars. Additional post-processing passes like surface materiality, vegetation and lighting enabled the final landscape compositions to reach the visual granularity and quality intended for the work.
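
As an illustration of one of the effects named above, the following Python sketch shows a common textbook form of thermal erosion on a height grid. It is an assumption for explanatory purposes, not the shader used in the work: wherever the drop to the lowest neighbouring cell exceeds a talus threshold, part of the excess material slides downhill. The function name `thermal_erosion_step` and the parameters `talus` and `fraction` are hypothetical.

```python
def thermal_erosion_step(height, talus=0.1, fraction=0.5):
    """Return a new height grid after one thermal erosion pass.

    height   -- list of rows of floats
    talus    -- largest height difference that stays stable
    fraction -- 0..1, how much of the unstable excess moves per pass
    """
    rows, cols = len(height), len(height[0])
    delta = [[0.0] * cols for _ in range(rows)]
    for r in range(rows):
        for c in range(cols):
            # Find the steepest downhill neighbour among the four adjacent cells.
            best_drop, target = 0.0, None
            for dr, dc in ((1, 0), (-1, 0), (0, 1), (0, -1)):
                nr, nc = r + dr, c + dc
                if 0 <= nr < rows and 0 <= nc < cols:
                    drop = height[r][c] - height[nr][nc]
                    if drop > best_drop:
                        best_drop, target = drop, (nr, nc)
            if target is not None and best_drop > talus:
                # Moving half the excess would bring the slope down to the talus.
                moved = fraction * (best_drop - talus) / 2
                delta[r][c] -= moved
                delta[target[0]][target[1]] += moved
    # Apply all transfers at once so the pass does not depend on scan order.
    return [[height[r][c] + delta[r][c] for c in range(cols)]
            for r in range(rows)]
```

Material is only moved, never created or removed, so the total height of the grid stays constant. In a real-time pipeline the same rule would typically run per pixel in a shader rather than in nested loops.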

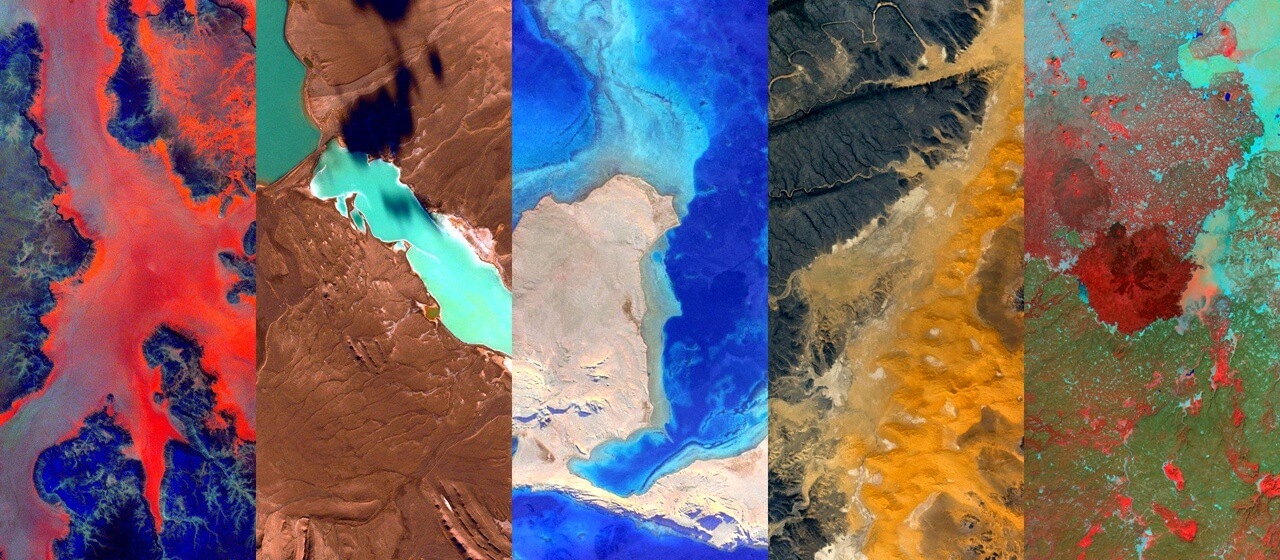

The diverse and painterly aesthetic of real satellite images of abstract landscapes served as visual inspiration for the look development of the final piece.

Accompanying Soundscape Composed By A.I.

The musical score was developed in collaboration with kling klang klong, guided by the intention to enhance the emotional, complex and unpredictable nature of the visual aesthetic. After researching various computational strategies, the musical composition was created using the Google Magenta Performance RNN learning model and a custom-built procedural system.

To collect training datasets, piano players were invited to improvise musical phrases based on the diverse visual sceneries of the animation. The range of auditory interpretations they produced served as a large-scale polyphonic inventory for the Artificial Intelligence to learn from.

To reflect the performative nature of the work, values output directly by the river simulation, such as the length, the curvature and the connection events of the meandering river, were analyzed and interpreted in real-time to influence musical parameters like tempo, note strength, intensity and pitch range.
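
A mapping of this kind could be sketched as follows. This is a hypothetical illustration in Python, not the procedural system built for the piece: the `RiverState` fields, the value ranges and the linear mapping are all assumptions, and the sketch does not call the Magenta model itself.

```python
from dataclasses import dataclass


@dataclass
class RiverState:
    """Values read from the simulation for one frame (assumed fields)."""
    length: float          # total channel length
    mean_curvature: float  # average absolute curvature
    cutoff_events: int     # loops cut off since the last reading


def lerp(low, high, t):
    """Linear interpolation with t clamped to the range 0..1."""
    t = max(0.0, min(1.0, t))
    return low + (high - low) * t


def to_music(state, max_length=1000.0, max_curvature=1.0):
    """Translate simulation values into musical control parameters."""
    return {
        # A longer river plays faster.
        "tempo_bpm": lerp(60.0, 120.0, state.length / max_length),
        # Tighter bends are played with stronger notes (MIDI velocity).
        "velocity": round(lerp(40, 110, state.mean_curvature / max_curvature)),
        # Each cutoff event widens the pitch range, up to a limit.
        "pitch_range_semitones": 12 + 6 * min(state.cutoff_events, 4),
    }
```

For example, `to_music(RiverState(length=500.0, mean_curvature=0.0, cutoff_events=0))` yields a tempo halfway between the two assumed limits. Clamping in `lerp` keeps the output musically usable even when the simulation briefly exceeds the expected ranges.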

Through an iterative process guided by kling klang klong’s evaluation, the machine composed an original musical score whose harmonic quality reached the standard expected of a human composer, creating a rich cinematic connection between the visuals and the music.

The final artwork consists of six different visual modes, each accompanied by its own distinctive musical composition. The full video below presents the most balanced interplay of visuals and sound.

credits

- Creative Direction: Cedric Kiefer

- Production: Aurélien Krieger

- Code & Design: Henryk Wollik

- Research: João da Fonseca, Luca Lolli

- Audio Concept & Composition: kling klang klong

- Hardware Provider: ICT AG | Berlin

exhibitions

- Contemporary Istanbul, Istanbul, 2017

- Funkhaus, Berlin, 2018

- National Design & Craft Gallery, Kilkenny, 2018

- STATE Studio, Berlin, 2019

- Google I/O, Mountain View, 2019

- AI for Good Global Summit, Human Rights Room, UN Geneva, 2019

- Ars Electronica STARTS Exhibition, Linz, 2019

- UN Art Center, Shanghai, 2019

- Scopitone Festival, Nantes, 2019

- TASIES 2019 5th International Art and Science Exhibition and Symposium, Beijing, 2019

- NTAA 19 New Technological Art Award Exhibition, Ghent, 2019

- Design Society, Shenzhen, 2019

- Mianki Gallery, Berlin, 2020

- PUZZLE, Thionville, 2020

- TLN UX 21 Festival des cultures hybrides, Toulon, 2021

- Future Media Art Festival, Taiwan, 2021

- Ekotisak Exhibition, Novi Sad, 2022

- Google Cloud Next, Zürich, 2022

- Echo Of Water, MOCA, Yinchuan, 2023

- Khroma, Berlin, 2024

awards

- Ars Electronica STARTS Prize, Honorary Mention, 2019

- Japan Media Arts Festival, Jury Selection, 2021